Remember Madonna’s unforgettable performance at Eurovision 2019 in Tel Aviv? Funded by philanthropist Sylvan Adams at an estimated $1.3 million, it instantly became one of the most controversial moments in the show’s history. Contrary to expectations for a polished pop icon, her live rendition of Like a Prayer suffered from audible pitch instability and intonation problems that even casual listeners could detect, leading to widespread debate on social media and music forums. Many critics didn’t focus on her artistic message. Instead, they asked a blunt question: Is Madonna, one of the most successful vocalists in history, no longer able to sing live at the level expected of her?

In the aftermath, countless fan‑made and studio‑adjusted versions of the performance circulated online in which the vocals sounded steady and far more polished, the result of digital processing with pitch correction tools such as Auto‑Tune. Even though no official statement confirmed that pitch correction was used during the live show, the contrast between studio‑polished sound and raw performance highlighted the dramatic gap that modern vocal processing can create.

Madonna’s case has since become almost academic — a real‑world illustration of how vocal correction technologies can not only improve a performance but also create the illusion of vocal ability that may not be present in reality.

This is exactly where the broader discussion about AI‑based vocal correction begins: blurring the line between a voice as it naturally exists and a voice that has been reshaped, corrected, and engineered for modern listening standards.

What Is Auto‑Tune? A Brief History and Impact

Auto‑Tune is pitch correction software introduced in 1997 by Antares Audio Technologies and its creator, engineer Andy Hildebrand. Designed to measure and discreetly correct the pitch of vocal tracks, it allowed recordings to sound in tune without manual editing.

Initially intended as an unobtrusive production tool to fix off‑key notes and enhance musical expression, Auto‑Tune quickly evolved into a creative and stylistic device. Cher’s 1998 hit Believe was the first prominent example where the effect was pushed to extremes, producing a distinctive robotic or futuristic vocal texture, a sound that later became known as the Cher effect and inspired countless artists across genres.

While Auto‑Tune was originally meant to help vocals stay in tune, it also became a signature sound, embraced by pop, hip‑hop, R&B, and electronic artists. Artists like T‑Pain made the effect central to their musical identity. Even today, many producers and performers use Auto‑Tune not just for correction, but to create distinctive sonic experiences.

Auto‑Tune vs. AI: Evolving Vocal Correction

Over time, Auto‑Tune has spread across many genres, from rock and pop to R&B and hip‑hop, including songs by Lil Wayne, Black Eyed Peas, and Kanye West. But it has also sparked debate. Critics argue that heavy reliance on pitch correction can mask imperfections, erasing nuance and emotional character from a performance. Among them is rapper Jay‑Z, who released the song D.O.A. (Death of Auto‑Tune) as a statement against the excessive use of this technology. Some see it as a digital prosthetic rather than an enhancement, a “Photoshop filter for music” that smooths imperfections at the expense of individuality.

Supporters say that it isn’t inherently bad; it’s just another tool in a producer’s toolkit. But the perception of artistry does change when performances are polished beyond natural human ability.

AI Tools

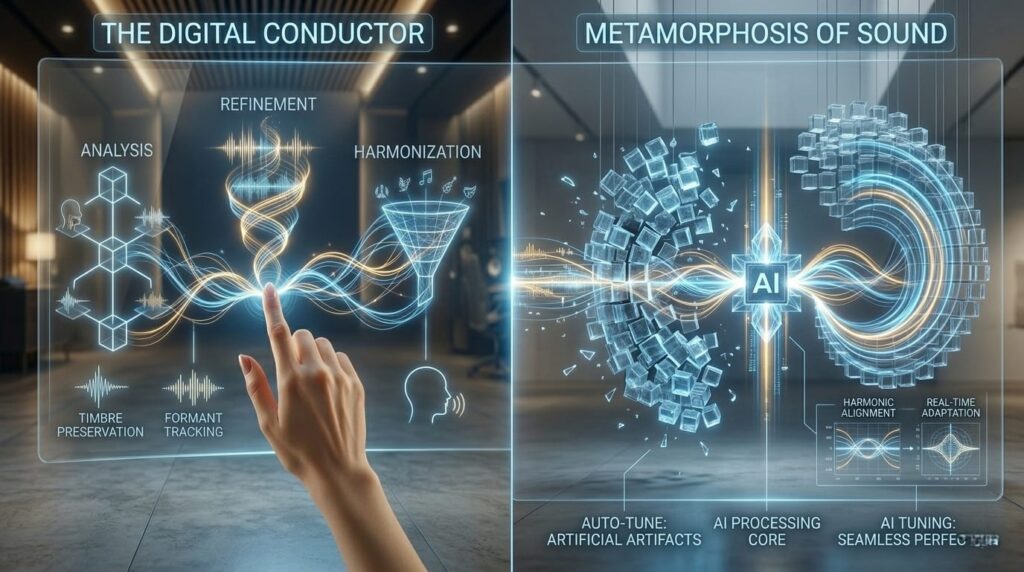

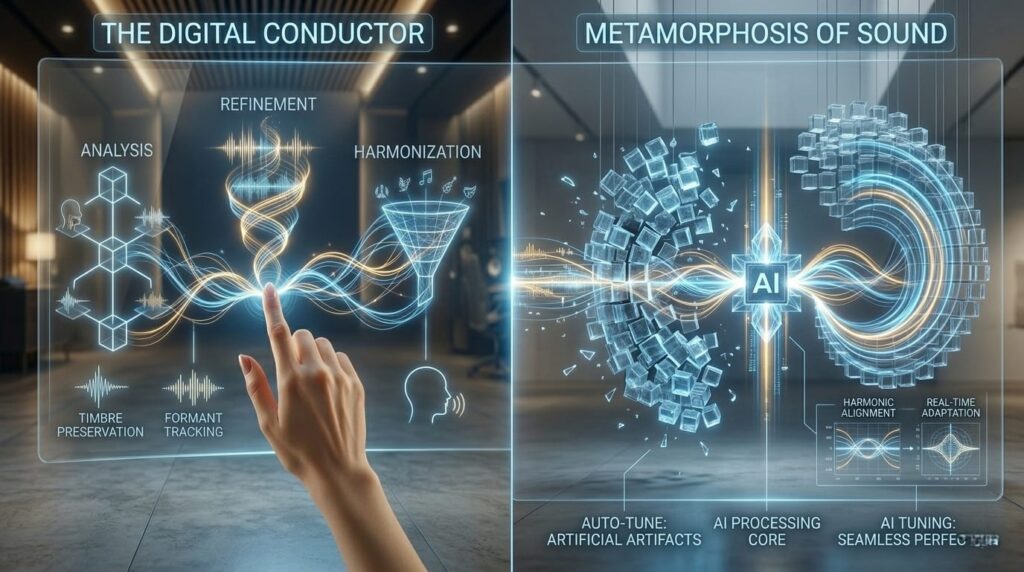

Now, modern tools powered by artificial intelligence have taken vocal tuning far beyond what Auto‑Tune originally achieved. These AI systems don’t simply snap off‑pitch notes to the nearest semitone; they interpret the melodic context, vocal nuance, emotional delivery, and natural vocal features, allowing them to correct pitch in a way that preserves expressive variation, not just mechanical accuracy.

For example, AI‑based vocal pitch correction tools such as Audimee analyze recordings in detail, identifying not only pitch errors but also stylistic features, like vibrato and phrasing, to deliver results that sound more organic and expressive.

Researchers are also developing frameworks like BERT‑APC, which aim to correct pitch without relying on predefined reference notes, preserving emotional and expressive intent while aligning pitch more naturally. Unlike older correction systems, these models infer musical context and preserve expressive deviation, a major step toward retaining the human element in performance.
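To make the contrast concrete, the classic behaviour described above, snapping a detected pitch to the nearest semitone, can be sketched in a few lines of Python. This is a minimal illustration and not Antares’s algorithm: the function names, the `strength` parameter, and the A4 = 440 Hz reference are assumptions introduced here, and a real tuner would also need pitch detection and audio resynthesis.

```python
import math

A4_HZ = 440.0   # assumed concert-pitch reference
A4_MIDI = 69    # MIDI note number conventionally assigned to A4


def hz_to_midi(freq_hz: float) -> float:
    """Convert a frequency to a (fractional) MIDI note number."""
    return A4_MIDI + 12.0 * math.log2(freq_hz / A4_HZ)


def midi_to_hz(midi: float) -> float:
    """Convert a (fractional) MIDI note number back to a frequency."""
    return A4_HZ * 2.0 ** ((midi - A4_MIDI) / 12.0)


def cents_off(freq_hz: float) -> float:
    """Signed distance, in cents, from the nearest equal-tempered semitone."""
    midi = hz_to_midi(freq_hz)
    return 100.0 * (midi - round(midi))


def snap_to_semitone(freq_hz: float, strength: float = 1.0) -> float:
    """Pull a pitch toward the nearest semitone.

    strength=1.0 is a hard snap; strength=0.0 leaves the pitch untouched.
    (`strength` is a hypothetical knob used only for this illustration.)
    """
    midi = hz_to_midi(freq_hz)
    target = round(midi)                         # nearest note on the fixed grid
    corrected = midi + strength * (target - midi)
    return midi_to_hz(corrected)


if __name__ == "__main__":
    sung = 445.0  # a slightly sharp A4
    print(f"{cents_off(sung):+.1f} cents from the nearest semitone")
    print(f"hard snap:    {snap_to_semitone(sung):.2f} Hz")
    print(f"partial snap: {snap_to_semitone(sung, strength=0.5):.2f} Hz")
```

With `strength=1.0` every note lands exactly on the grid, which corresponds to the hard, robotic setting; lower values leave part of the singer’s deviation in place. The grid itself is a predefined reference, which is the dependency that context‑aware approaches try to relax.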

Auto‑Tune in Live Performance: Then and Now

At first, Auto‑Tune was mainly a studio tool that producers applied subtly after recording. Over the years, though, versions built for the stage have emerged. Tools such as Antares Auto‑Tune Live and Waves Tune Real‑Time process vocals in real time during live shows, with latency low enough that the performer perceives the corrected sound immediately.

These tools plug into live sound systems and analyze vocals on the fly, offering performers the confidence to deliver pitch‑corrected live vocals in front of audiences. This is a dramatic leap from earlier days when tuning happened only in studios.
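Why latency matters can be shown with some back‑of‑the‑envelope arithmetic. The sketch below assumes a simplified model in which delay comes from one input buffer, one output buffer, and a fixed processing time; the function names and the example numbers are illustrative assumptions, not specifications of any product named above.

```python
def buffer_latency_ms(buffer_samples: int, sample_rate_hz: float) -> float:
    """Time needed to fill one audio buffer, in milliseconds."""
    return 1000.0 * buffer_samples / sample_rate_hz


def round_trip_latency_ms(buffer_samples: int,
                          sample_rate_hz: float,
                          processing_ms: float = 0.0) -> float:
    """Simplified round trip: one buffer in, one buffer out, plus processing.

    Real systems add converter and driver delays that this model ignores.
    """
    one_way = buffer_latency_ms(buffer_samples, sample_rate_hz)
    return 2.0 * one_way + processing_ms


if __name__ == "__main__":
    # Hypothetical settings: compare small and large buffers at 48 kHz.
    for size in (64, 256, 1024):
        total = round_trip_latency_ms(size, 48_000, processing_ms=1.0)
        print(f"{size:>5} samples -> {total:.2f} ms round trip")
```

Under this model, smaller buffers shorten the delay between singing a note and hearing the corrected version, at the cost of leaving the processor less time to do its work per buffer.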

AI Vocal Correction: The Future of Musical Expression

Today, as AI evolves, the distinction between correction and creation becomes blurrier. AI doesn’t just fix pitch, but can also preserve expressive nuance, interpret context, and generate performances that feel less like digital editing and more like artistic collaboration. Modern research and AI models are explicitly built to maintain naturalness and emotional logic, not just pitch precision.

This move from mechanical correction to contextual interpretation may be why AI‑based tools are increasingly preferred for producing vocals that still feel human, even when tuning is applied.

Is This a Good or Bad Evolution?

The debate continues. Some view AI voice correction as a way to democratize music production, allowing creators of all skill levels to achieve studio‑level performances. Others worry that reliance on post‑production tools will discourage vocal training and reduce the presence of genuine, imperfect human expression in music.

One thing is clear: the era of strict pitch‑only processing, the world of Auto‑Tune, has expanded into something much broader. Today’s tools can interpret, enhance, and even generate vocal performances using complex AI models that consider emotional expression and artistic intention.

Conclusion: Balancing Technology with Artistry

Auto‑Tune revolutionized music by offering a simple solution for correcting pitch and inspiring new artistic effects that influenced genres around the world. Now, AI‑based vocal technologies are taking that legacy further, offering context‑aware, emotion‑sensitive vocal tuning and transformation that preserve expressive qualities rather than simply aligning pitch.

The real question for artists and producers today isn’t whether they should use Auto‑Tune or AI. It’s how to balance the technologies with authentic artistic expression. With AI vocal tools that enhance without erasing human nuance, the future of music may not be about replacing talent, but about amplifying it in new and meaningful ways.